How do you ensure the stability of your Kubernetes (K8s) clusters? How do you know that your manifests are syntactically valid? Are you sure you don't have any invalid data types? Are any mandatory fields missing?

Most often, we become aware of these misconfigurations only at the worst time: when we're trying to deploy the new manifests.

Specialized tools and a "shift-left" approach make it possible to verify a Kubernetes schema before it's applied to a cluster. This article addresses how you can avoid misconfigurations and which tools are the best to use.

TL;DR

Running schema validation tests is important, and the sooner the better. If all machines (e.g., local developer environments, continuous integration [CI], etc.) have access to your Kubernetes cluster, run kubectl --dry-run in server mode on every code change. If this isn't possible and you want to perform schema validation tests offline, use kubeconform together with a policy-enforcement tool to have optimal validation coverage.

Schema-validation tools

Verifying the state of Kubernetes manifests may seem like a trivial task because the Kubernetes command-line interface (CLI), kubectl, can verify resources before they're applied to a cluster. You can verify the schema by adding the dry-run flag (--dry-run=client or --dry-run=server) to the kubectl create or kubectl apply commands; the command then performs the validation without applying the Kubernetes resources to the cluster.
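
As a rough illustration of the dry-run flag described above, here is a minimal Python sketch that only builds the kubectl command line for a CI script. The function name `dry_run_command` and the file name `deployment.yaml` are hypothetical, and the sketch assumes a kubectl version that accepts the `--dry-run=<mode>` form.

```python
import shlex
from typing import List


def dry_run_command(manifest: str, mode: str = "server") -> List[str]:
    """Build a kubectl command that validates a manifest without applying it.

    `mode` is either "client" or "server", matching --dry-run=client/server.
    """
    if mode not in ("client", "server"):
        raise ValueError(f"mode must be 'client' or 'server', got {mode!r}")
    return ["kubectl", "apply", f"--dry-run={mode}", "-f", manifest]


if __name__ == "__main__":
    # Print the command instead of running it, so no cluster is needed here.
    print(shlex.join(dry_run_command("deployment.yaml")))
```

In a real pipeline the returned list could be passed to `subprocess.run`, which for server mode requires credentials and connectivity to the cluster.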

But I can assure you that it's actually more complex. kubectl needs a running Kubernetes cluster to obtain the schema for the set of resources being validated. So, when incorporating manifest verification into a CI process, you must also manage connectivity and credentials to perform the validation. This becomes even more challenging when dealing with multiple microservices in several environments (e.g., prod, dev, etc.).

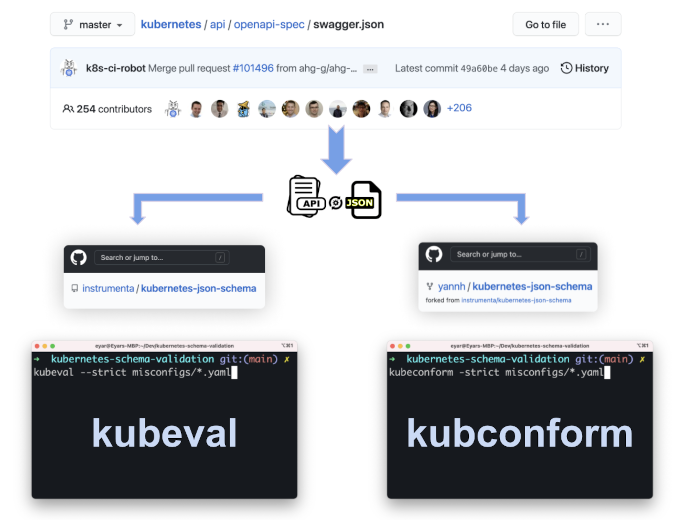

Kubeval and kubeconform are CLI tools developed to validate Kubernetes manifests without requiring a running Kubernetes environment. Because kubeconform was inspired by kubeval, they operate similarly; verification is performed against pre-generated JSON schemas created from the OpenAPI specifications (swagger.json) for each Kubernetes version. All that remains to run the schema validation tests is to point the tool executable to a single manifest, directory or pattern.
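
To make the idea of offline validation against a pre-generated schema more concrete, here is a minimal Python sketch. It is not how kubeval or kubeconform are implemented; `PORT_SCHEMA` is a hypothetical, heavily trimmed fragment, and the `validate` helper handles only `type`, `required`, and `properties`.

```python
from typing import Any, Dict, List

# Hypothetical, heavily trimmed schema fragment for a Service port.
# The real schemas are generated from the Kubernetes OpenAPI spec and are far larger.
PORT_SCHEMA: Dict[str, Any] = {
    "type": "object",
    "required": ["port"],
    "properties": {
        "port": {"type": "integer"},
        "protocol": {"type": "string"},
    },
}

_TYPES = {"object": dict, "string": str, "integer": int, "array": list, "boolean": bool}


def validate(value: Any, schema: Dict[str, Any], path: str = "$") -> List[str]:
    """Return a list of human-readable schema violations (empty if valid)."""
    errors: List[str] = []
    expected = schema.get("type")
    if expected:
        ok = isinstance(value, _TYPES[expected])
        # bool is a subclass of int in Python, so reject it explicitly for integers.
        if expected == "integer" and isinstance(value, bool):
            ok = False
        if not ok:
            return [f"{path}: expected {expected}, got {type(value).__name__}"]
    if isinstance(value, dict):
        for key in schema.get("required", []):
            if key not in value:
                errors.append(f"{path}: missing required field '{key}'")
        for key, sub_schema in schema.get("properties", {}).items():
            if key in value:
                errors.extend(validate(value[key], sub_schema, f"{path}.{key}"))
    return errors


if __name__ == "__main__":
    for problem in validate({"protocol": 80}, PORT_SCHEMA):
        print(problem)
```

No cluster is involved at any point: the check needs only the manifest data and the schema, which is what makes this style of validation easy to run on any developer or CI machine.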

(Eyar Zilberman, CC BY-SA 4.0)

Comparing the tools

Now that you're aware of the tools available for Kubernetes schema validation, let's compare some core abilities—misconfiguration coverage, speed tests, support for different versions, Custom Resource Definitions support, and docs—in:

- kubeval

- kubeconform

- kubectl dry-run in client mode

- kubectl dry-run in server mode

Misconfiguration coverage

I donned my QA hat and generated some (basic) Kubernetes manifest files with some intentional misconfigurations and then ran them against all four tools.

| Misconfig/Tool | kubeval / kubeconform | kubectl dry-run (client mode) | kubectl dry-run (server mode) |
|---|---|---|---|
| API deprecation | ✅ Caught | ✅ Caught | ✅ Caught |
| Invalid kind value | ✅ Caught | ❌ Didn't catch | ✅ Caught |
| Invalid label value | ❌ Didn't catch | ❌ Didn't catch | ✅ Caught |
| Invalid protocol type | ✅ Caught | ❌ Didn't catch | ✅ Caught |
| Invalid spec key | ✅ Caught | ✅ Caught | ✅ Caught |
| Missing image | ❌ Didn't catch | ❌ Didn't catch | ✅ Caught |
| Wrong K8s indentation | ✅ Caught | ✅ Caught | ✅ Caught |

In summary: all misconfigurations were caught by kubectl dry-run in server mode.

Some misconfigurations were caught by everything:

- Invalid spec key: Caught successfully by everything!

- API deprecation: Caught successfully by everything!

- Wrong k8s indentation: Caught successfully by everything!

However, some had mixed results:

- Invalid kind value: Caught by kubeval/kubeconform but missed by kubectl dry-run in client mode.

- Invalid protocol type: Caught by kubeval/kubeconform but missed by kubectl dry-run in client mode.

- Invalid label value: Missed by both kubeval/kubeconform and kubectl dry-run in client mode.

- Missing image: Missed by both kubeval/kubeconform and kubectl dry-run in client mode.

Conclusion: Running kubectl dry-run in server mode caught all misconfigurations, while kubeval/kubeconform missed two of them. It's also interesting that running kubectl dry-run in client mode is almost useless: it misses some obvious misconfigurations and still requires a connection to a running Kubernetes environment.

- All the schema validation tests were performed against Kubernetes version 1.18.0.

- Because kubeconform is based on kubeval, they provide the same result when run against the files with the misconfigurations.

- kubectl is one tool, but each mode (client or server) produces a different result (as you can see from the table).

Benchmark speed test

I used hyperfine to benchmark the execution time of each tool. First, I ran it against all the files with misconfigurations (seven files in total). Then I ran it against 100 Kubernetes files (all the files contain the same config).

Results for running the tools against seven files with different Kubernetes schema misconfigurations:

Results for running the tools against 100 files with valid Kubernetes schemas:

Conclusion: While kubeconform (#1), kubeval (#2), and kubectl --dry-run=client (#3) provide fast results on both tests, kubectl --dry-run=server (#4) is slower, especially when it evaluates 100 files. Yet 60 seconds for generating a result is still a good outcome in my opinion.

Kubernetes versions support

Both kubeval and kubeconform accept the Kubernetes schema version as a flag. Although both tools are similar (as mentioned, kubeconform is based on kubeval), one of the key differences is that each tool relies on its own set of pre-generated JSON schemas:

- Kubeval: instrumenta/kubernetes-json-schema (last commit: 133f848 on April 29, 2020)

- Kubeconform: yannh/kubernetes-json-schema (last commit: a660f03 on May 15, 2021)

As of May 2021, kubeval supports Kubernetes schema versions only up to 1.18.1, while kubeconform supports the latest Kubernetes schema available, 1.21.0. With kubectl, it's a little bit trickier. I don't know which version of kubectl introduced the dry run, but I tried it with Kubernetes version 1.16.0 and it still worked, so I know it's available in Kubernetes versions 1.16.0–1.18.0.

The variety of supported Kubernetes schemas is especially important if you want to migrate to a new Kubernetes version. With kubeval and kubeconform, you can set the version and start evaluating which configurations must be changed to support the cluster upgrade.
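
As a sketch of how the version flag could be used when planning an upgrade, the Python helper below builds one command per target Kubernetes version. The helper name `offline_validation_commands` and the file name are hypothetical; the flags are the version flags the two tools expose (`-kubernetes-version` for kubeconform, `--kubernetes-version` for kubeval), and the commands are only constructed, not executed.

```python
from typing import Iterable, List


def offline_validation_commands(
    manifest: str, versions: Iterable[str], tool: str = "kubeconform"
) -> List[List[str]]:
    """Build one offline validation command per Kubernetes schema version.

    `manifest` is assumed to be a single manifest file.
    """
    if tool == "kubeconform":
        version_flag = "-kubernetes-version"
    elif tool == "kubeval":
        version_flag = "--kubernetes-version"
    else:
        raise ValueError(f"unsupported tool: {tool!r}")
    return [[tool, version_flag, version, manifest] for version in versions]


if __name__ == "__main__":
    # Check the same manifest against the current and the target cluster version.
    for command in offline_validation_commands("deployment.yaml", ["1.18.0", "1.21.0"]):
        print(" ".join(command))
```

Running the same manifest against both the current and the target version gives a quick list of configurations that need to change before the upgrade.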

Conclusion: The fact that kubeconform has all the schemas for all the different Kubernetes versions available—and also doesn't require Minikube setup (as kubectl does)—makes it a superior tool when comparing these capabilities to its alternatives.

Other things to consider

Custom Resource Definition (CRD) support

Both kubectl dry-run and kubeconform support the CRD resource type, while kubeval does not. According to kubeval's docs, you can pass a flag to kubeval to tell it to ignore missing schemas so that it will not fail when testing a bunch of manifests where only some are resource type CRD.

Documentation

Kubeval is a more popular project than kubeconform; therefore, its community and documentation are more extensive. Kubeconform doesn't have official docs, but it does have a well-written README file that explains its capabilities pretty well. The interesting part is that although Kubernetes-native tools such as kubectl are usually well-documented, it was really hard to find the information needed to understand how the dry-run flag works and its limitations.

Conclusion: Although it's not as famous as kubeval, the CRD support and good-enough documentation make kubeconform the winner, in my opinion.

Strategies for validating Kubernetes schema using these tools

Now that you know the pros and cons of each tool, here are some best practices for leveraging them within your Kubernetes production-scale development flow:

- ⬅️ Shift-left: When possible, the best setup is to run kubectl --dry-run=server on every code change. You probably can't do that because you can't allow every developer or CI machine in your organization to have a connection to your cluster. So, the second-best effort is to run kubeconform.

- Because kubeconform doesn't cover all common misconfigurations, it's recommended to run it with a policy enforcement tool on every code change to fill the coverage gap.

- Buy vs. build: If you enjoy the engineering overhead, then kubeconform + conftest is a great combination of tools to get good coverage. Alternatively, there are tools that can provide you with an out-of-the-box experience to help you save time and resources, such as Datree (whose schema validation is powered by kubeconform).

- During the CD step, it shouldn't be a problem to connect with your cluster, so you should always run kubectl --dry-run=server before deploying your new code changes.

- Another option for using kubectl dry-run in server mode, without having a connection to your Kubernetes environment, is to run Minikube + kubectl --dry-run=server. The downside of this hack is that you must also set up the Minikube cluster like prod (i.e., same volumes, namespace, etc.), or you'll encounter errors when trying to validate your Kubernetes manifests.
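
To illustrate the kind of rule a policy enforcement tool can add on top of kubeconform, here is a minimal Python sketch that covers the two misconfigurations the offline tools missed in the table above: an invalid label value and a missing image. It is only an illustration and not conftest's API (conftest policies are written in Rego). The function name `policy_violations` is hypothetical, the manifest is assumed to be an already-parsed, Deployment-shaped dictionary, and the label rule follows the Kubernetes documentation (at most 63 characters, beginning and ending with an alphanumeric character).

```python
import re
from typing import Any, Dict, List

# Label values: empty, or alphanumeric at both ends with '-', '_', '.' allowed inside.
_LABEL_VALUE = re.compile(r"(([A-Za-z0-9][-A-Za-z0-9_.]*)?[A-Za-z0-9])?")


def policy_violations(manifest: Dict[str, Any]) -> List[str]:
    """Return policy violations for a parsed, Deployment-shaped manifest."""
    violations: List[str] = []

    labels = manifest.get("metadata", {}).get("labels") or {}
    for key, value in labels.items():
        valid = (
            isinstance(value, str)
            and len(value) <= 63
            and _LABEL_VALUE.fullmatch(value) is not None
        )
        if not valid:
            violations.append(f"invalid label value for '{key}': {value!r}")

    # Assumption: containers live under spec.template.spec, as in a Deployment.
    pod_spec = manifest.get("spec", {}).get("template", {}).get("spec", {})
    for container in pod_spec.get("containers", []):
        if not container.get("image"):
            violations.append(f"container '{container.get('name', '?')}' has no image")

    return violations


if __name__ == "__main__":
    deployment = {
        "kind": "Deployment",
        "metadata": {"labels": {"app": "web", "owner": "-bad-"}},
        "spec": {"template": {"spec": {"containers": [{"name": "web"}]}}},
    }
    for violation in policy_violations(deployment):
        print(violation)
```

Checks like these run fully offline, so they can sit next to kubeconform on every code change, with kubectl --dry-run=server kept for the CD step.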

Thank you to Yann Hamon for creating kubeconform—it's awesome! This article wouldn't be possible without you. Thank you for all of your guidance.

This article originally appeared on Datree.io and is reprinted with permission.
